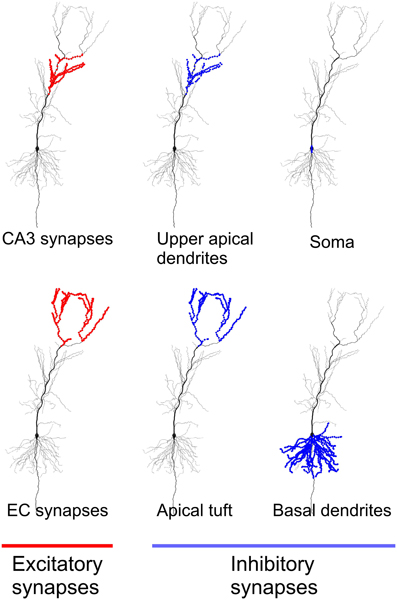

Pyramidal neurons are the principal long-projecting cells of the cerebral cortex and hippocampus. They are so named because of the characteristic large apical dendrite, giving them a pyramidal shape. It appears that the voltage waves recorded in the EEG represent the summation of synaptic potentials in the apical dendrites of pyramidal cells in the cortex. Dendritic spines are absent on the soma, and the number of spines increases away from it. The typical apical dendrite in a rat has at least 3000 dendritic spines. The average human apical dendrite is approximately twice the length of a rat’s, so the number of dendritic spines present on a human apical dendrite could be as high as 6000.

The establishment of bipolar polarity and the leading edge, and apical dendrite development in pyramidal neurons in vivo, occur even when axon formation is prevented. Furthermore, mounting recent evidence suggests that directed mechanisms might mediate bipolar polarity/leading process and subsequent apical dendrite development. Apical dendrites of Betz cells are important sites for the integration of cortical input; however, their health has not been fully assessed in ALS patients.

In order to classify linearly non-separable data, neurons are typically organized into multi-layer neural networks that are equipped with at least one hidden layer. Inspired by some recent discoveries in neuroscience, we propose a new neuron model along with a novel activation function enabling the learning of non-linear decision boundaries using a single neuron. We show that a standard neuron followed by the novel apical dendrite activation (ADA) can learn the XOR logical function with 100% accuracy. Furthermore, we conduct experiments on five benchmark data sets from computer vision, signal processing and natural language processing, i.e. MOROCO, UTKFace, CREMA-D, Fashion-MNIST, and Tiny ImageNet, showing that ADA and the leaky ADA functions provide superior results to Rectified Linear Units (ReLU), leaky ReLU, RBF and Swish, for various neural network architectures, e.g. one-hidden-layer or two-hidden-layer multi-layer perceptrons (MLPs) and convolutional neural networks (CNNs) such as LeNet, VGG, ResNet and Character-level CNN. We obtain further performance improvements when we replace the standard model of the neuron with our pyramidal neuron with apical dendrite activations (PyNADA).
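To make the single-neuron XOR claim concrete, here is a minimal sketch. The text above does not give the ADA formula, so the code assumes a bump-shaped form, `max(0, x) * exp(-alpha * x + c)`, which rises for small positive inputs and decays for large ones. The weights, bias, `alpha`, `c` and the 0.5 threshold are hand-picked for illustration rather than learned, and the function names are hypothetical.

```python
import math


def ada(x, alpha=2.0, c=2.0):
    """Assumed ADA-style activation: max(0, x) * exp(-alpha * x + c).

    This form is an assumption for illustration; it is non-monotonic,
    peaking at a moderate positive input and decaying afterwards.
    """
    return max(0.0, x) * math.exp(-alpha * x + c)


def single_neuron(x1, x2, w1=1.0, w2=1.0, b=0.0):
    """One standard neuron (weighted sum plus bias) followed by the activation."""
    z = w1 * x1 + w2 * x2 + b
    return ada(z)


def predict_xor(x1, x2, threshold=0.5):
    """Threshold the neuron's activation to get a binary label."""
    return 1 if single_neuron(x1, x2) > threshold else 0


if __name__ == "__main__":
    for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
        print(x1, x2, round(single_neuron(x1, x2), 3), predict_xor(x1, x2))
```

With these hand-set values, the pre-activation is 0, 1, 1 and 2 for the four inputs, and the activation is high only when exactly one input is on. A monotonic activation such as ReLU cannot separate these cases with a single neuron, which is why a hidden layer is normally needed.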
